Opening bars again are composed by Sarah Shugarman, with a sample at the start of a Nagra IV-S starting up, and the outro music is Paradigm Shift by Gavin Luke.

Hi folks, diving into the fast-moving world of AI Art and the impacts and reactions in the art world again here. It came up a lot in my feeds over the last two weeks, and I’ve seen some things in that mix I didn’t like, from all quarters.

The idea that we get to decide who is or isn’t an artist, or have any business threatening to punch people who are using these tools, is just not ok for a long list of reasons. Not even as venting ‘jokes’.

As part of the whole, I talk a bit about how the art that was scraped unethically isn’t still directly present in the operating AI. What’s there is what it ‘learned’ during the training period, when it did have access to the training data including that art.

That doesn’t absolve the developers of that overreach, but it’s also what’s going to complicate going after them legally, and why it’s not as simple as straightforward plagiarism.

I talk about that, and I wrote a bunch about it here on FB too, if you want to read the seeds of some of what I cover in this episode. I also mention Jodo's Tron, a set of images generated by Johnny Darrell.

Since recording this, someone pointed out this thread from Craig Richardson to me. I wanted to note it, but also say that his key summary, that “it can be described as a form of encoding or compression”, which is central to his case, is VERY hand-wavy and not really correct as I’ve understood this stuff.

If data compression also included putting all the data about one image in a box with hundreds of others and shuffling it up so dramatically that retrieving one specific image intact is no longer really possible, then ok, sure? If compression was the goal I’d call that data corruption, so it’s not really an accurate summary IMO. It’s more biased framing to make a case than strictly correct.
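To make that box analogy a bit more concrete, here is a toy Python sketch. Everything in it is an assumption for illustration: the "images" are just random bytes, and a plain per-pixel average stands in for the "shuffled box". It is far cruder than what training actually does and is not a description of how any real model stores information. It only contrasts lossless compression, where the exact original comes back, with a blended summary where it doesn't.

```python
import random
import zlib

rng = random.Random(0)

# Hypothetical stand-ins for images: 300 tiny "images" of 64 grey values each.
images = [bytes(rng.randrange(256) for _ in range(64)) for _ in range(300)]

# Actual (lossless) compression: the exact original comes back out.
packed = zlib.compress(images[0])
restored = zlib.decompress(packed)
round_trip_ok = restored == images[0]

# The "shuffled box": keep only one per-pixel average over all 300 images.
box = [sum(img[i] for img in images) / len(images) for i in range(64)]

# Largest per-pixel gap between that average and the first image.
max_gap = max(abs(box[i] - images[0][i]) for i in range(64))

print("lossless round trip matches original:", round_trip_ok)
print("largest gap between the box and image 0:", round(max_gap, 1))
```

The compressed copy decompresses back to the same bytes, while the averaged "box" sits a long way from any one image that went into it, which is the distinction the paragraph above is drawing.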

Of course I’m not an expert myself, so I’m not picking any of these hills to die on, but it really does not sound like how this all works, going by how I’ve understood it since well before it became an AI Art issue.

I think these next three videos are well worth your time. An interview with Dave McKean, and two from PROKO all about the questions and challenges and ethics of AI art.

So I had collected a lot of free credits on Night Café. I use it for my current occasional experiments because it’s free and at least I’m not giving Stability AI money directly. But I wanted to try something with seed images and lots of iterations.

I uploaded this photo of me at my messy desk…

…and this image from Bloom, a wordless horror sci-fi short comic I’m working on…

And this was my prompt text and settings for the AI…

"An artist being made redundant by AI, hyper detailed, fantastical, cyberpunk, photorealistic, textural, gritty, sci-fi, Jodorowsky, surrealist, technology, blue and Ochre pallet, mostly warm colors, Professional photography, bokeh, wide angle, natural lighting, canon lens, shot on dslr 64 megapixels sharp focus"

Weight: 1; Initial Resolution: Medium; Runtime: Medium; Seed: 609707; Overall Prompt Weight: 60%; Noise Weight: 60%; Model Version: Stable Diffusion v2.0 (768px); Sampling method: K_DPM_2_ANCESTRAL; CLIP Guidance: FAST.

So I had the noise and prompt weight at 60% and was trying to see what would happen when I put those parameters in competition.
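For a rough feel of what a noise setting like that does to a seed image, here is a toy Python sketch. It is an assumption-heavy illustration, not Night Café's actual implementation, which isn't described here. The `add_noise` and `correlation` helpers are hypothetical, the "seed image" is just a list of random numbers, and the mixing formula is borrowed from the general diffusion idea of blending an image with Gaussian noise. The settings seed 609707 is reused only to make the run repeatable.

```python
import math
import random

def add_noise(pixels, noise_weight, rng):
    """Blend a flat list of pixel values with Gaussian noise.

    noise_weight = 0 keeps the seed image as-is; 1 replaces it with pure noise.
    (Assumed formula for illustration only.)
    """
    keep = math.sqrt(1.0 - noise_weight)
    mix = math.sqrt(noise_weight)
    return [keep * p + mix * rng.gauss(0.0, 1.0) for p in pixels]

def correlation(a, b):
    """Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

rng = random.Random(609707)
# Hypothetical stand-in for a seed image: 10,000 random "pixel" values.
seed_image = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

similarity = {}
for weight in (0.0, 0.3, 0.6, 0.9):
    noised = add_noise(seed_image, weight, rng)
    similarity[weight] = correlation(seed_image, noised)
    print(f"noise weight {weight:.0%}: similarity to seed {similarity[weight]:.2f}")
```

In this toy version, the higher the noise weight, the less the starting point resembles the seed image, so more of the final picture has to come from somewhere else, such as the prompt.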

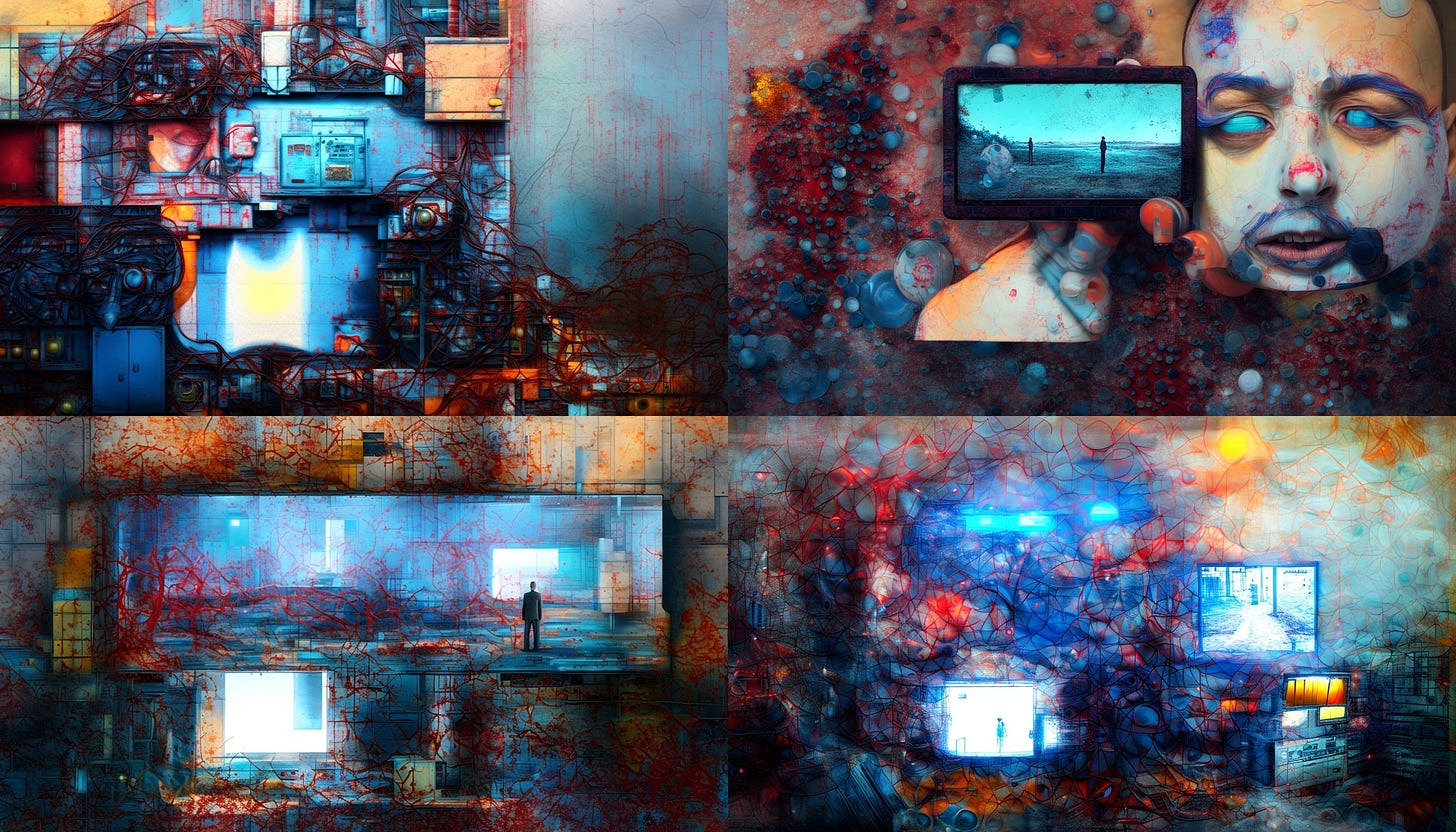

I used a lot of credits to maximize some of the parameters and here are the results! It’s interesting stuff, you can see the way my two images informed two sets of images here…

A few of these upscaled to be something intriguing. It’s fascinating how it picked up on my screens and the desktop art in this one and interpolated that into something where I can see it echoing the placement of things in the picture plane, the web of textures from my drawing, and the values from my photo, but everything is something different from my seeds. That difference comes from the references in the prompt text and the noise setting being highish.

But note, re the idea that it’s just a form of data compression at its core, how neither of my seed images is really here. They have informed the compositional directions it took, but especially with the noise set to over half, it’s far more a blending of influences. ED: Meant to underline that this highlights an aspect of ‘fitting’, what they call the AI adhering or not to the things it has in its database from the training data images.

Overfitting is when it does things like recreate watermark shapes or aspects of images that are too close for legal or ethical comfort to the training data. By introducing more noise I was trying to see if that pushed it away from those things. It’s hard to be sure, but I doubt anything we see here is really representative of any single image in the training data at this stage. It looks very much like a synthesis of all that, with my seeds directing the new renders.

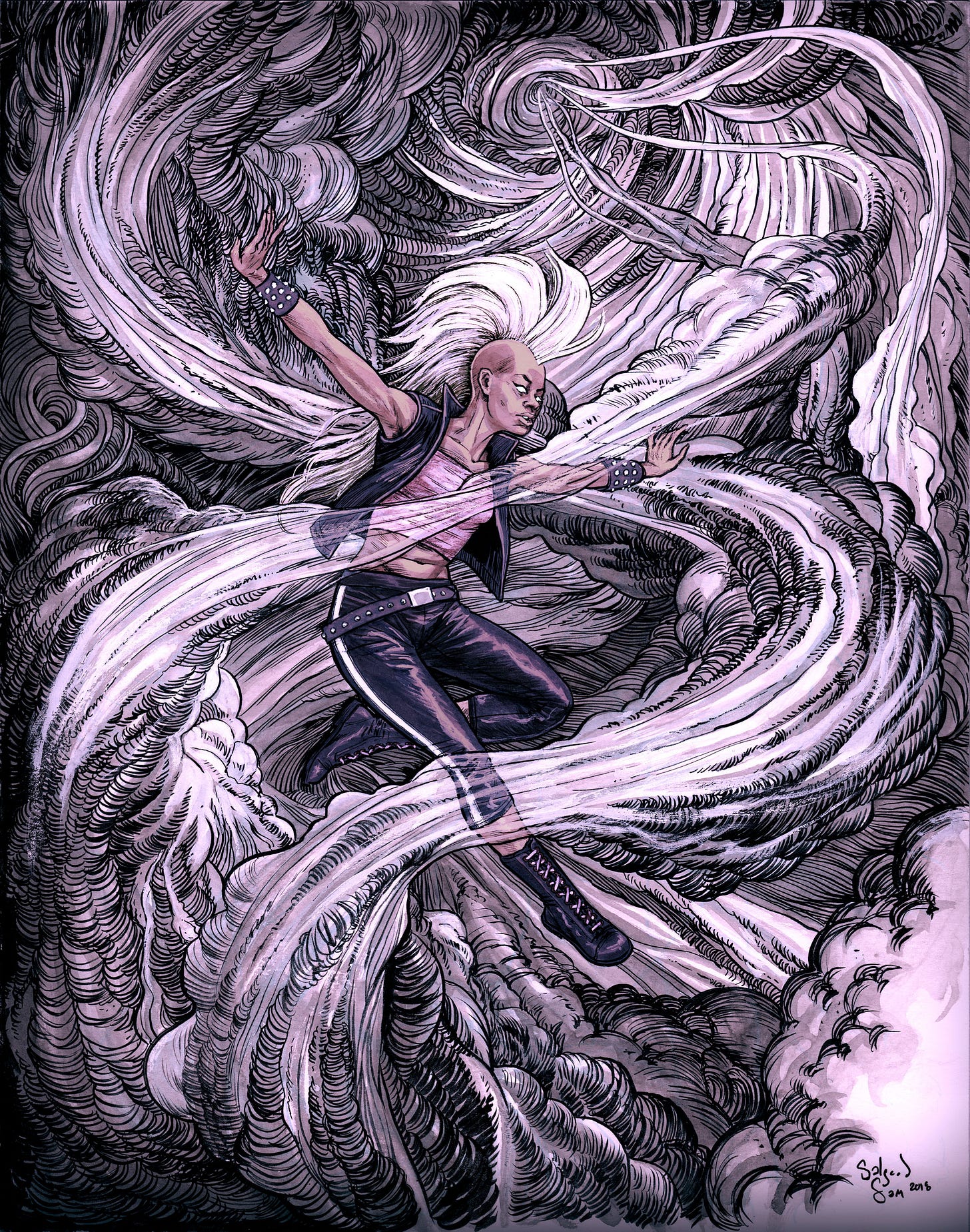

ED: OH, I forgot to add my Mohican Storm Commission art!

Mentioned this in the show as me applying everything I learned about drawing clouds from studying BWS’s cover for UNCANNY X-MEN # 198 1985 MARVEL STORM FORGE LIFEDEATH II for hours on end as a teenager. It’s also influenced by Eleuteri Serpieri, whose art I had in some copies of Heavy Metal and which made for a lot of late night study as well.